As a longtime mobile and server-side engineer, I have been involved in many different types of projects over the years. Some were small and some were large, but all shared one recurring trend: the mobile clients need to consume data from a server to perform a task. Sometimes the data being consumed is small, and other times the application needs to continuously poll for, or be notified of, new data to stay up to date in real time. So far, this is probably nothing out of the ordinary, right? But what about taking these requirements and running them at scale, across different mobile platforms, on cellular networks around the globe? All of a sudden, something that used to be “business as usual” can create a lot of network overhead on your servers and leave users waiting on high-latency networks while their applications struggle to connect. That is why I wanted to write this article: to discuss the mobile connection problem at scale and to share some proven techniques that I have seen cut mobile connection time by up to 45% in some cases.

NOTE: This article provides technical and architectural guidance on optimizing your mobile connections at scale, but it does not walk through complete implementations of these solutions. Implementation details are often best left to the environment's authors, based upon their current constraints.

The Problem: Networking at Scale with Mobile 📡 📱

As described above, your platform may have multiple mobile clients depending upon your product and ecosystem. These mobile clients may be fairly lightweight too, making only a few unique authenticated connections with a very optimized payload. This is probably the best-case scenario. Now, let’s take that best-case scenario and scale your mobile clients up to 100,000 unique sessions daily across your ecosystem - for many platforms this may even be a small fraction of the user base. Then, let’s project that each unique session makes 10 unique connections during its daily use, which in mobile is most likely a very low number. All of a sudden, your mobile ecosystem is adding 1,000,000 connections to your infrastructure daily. So, where can you optimize this traffic, and how do you know whether you are seeing benefits from your optimization? This is the problem that this article aims to examine.
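To put that figure in perspective, here is a minimal back-of-the-envelope sketch of the arithmetic above. The session and connection counts are the ones from this scenario; the function names are only illustrative, and the per-second figure is a flat daily average that ignores peak hours.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_connections(sessions_per_day: int, connections_per_session: int) -> int:
    """Total new connections the mobile clients add per day."""
    return sessions_per_day * connections_per_session


def average_connections_per_second(total_per_day: int) -> float:
    """Flat daily average; real traffic will be burstier than this."""
    return total_per_day / SECONDS_PER_DAY


total = daily_connections(100_000, 10)
print(total)  # 1000000
print(round(average_connections_per_second(total), 2))  # 11.57
```

Every one of those connections pays for a TCP setup and a TLS handshake, which is why shaving time off each connection adds up quickly.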

Solutions: Isolate Network Connections and Utilize Mobile's Capabilities 🚦📱

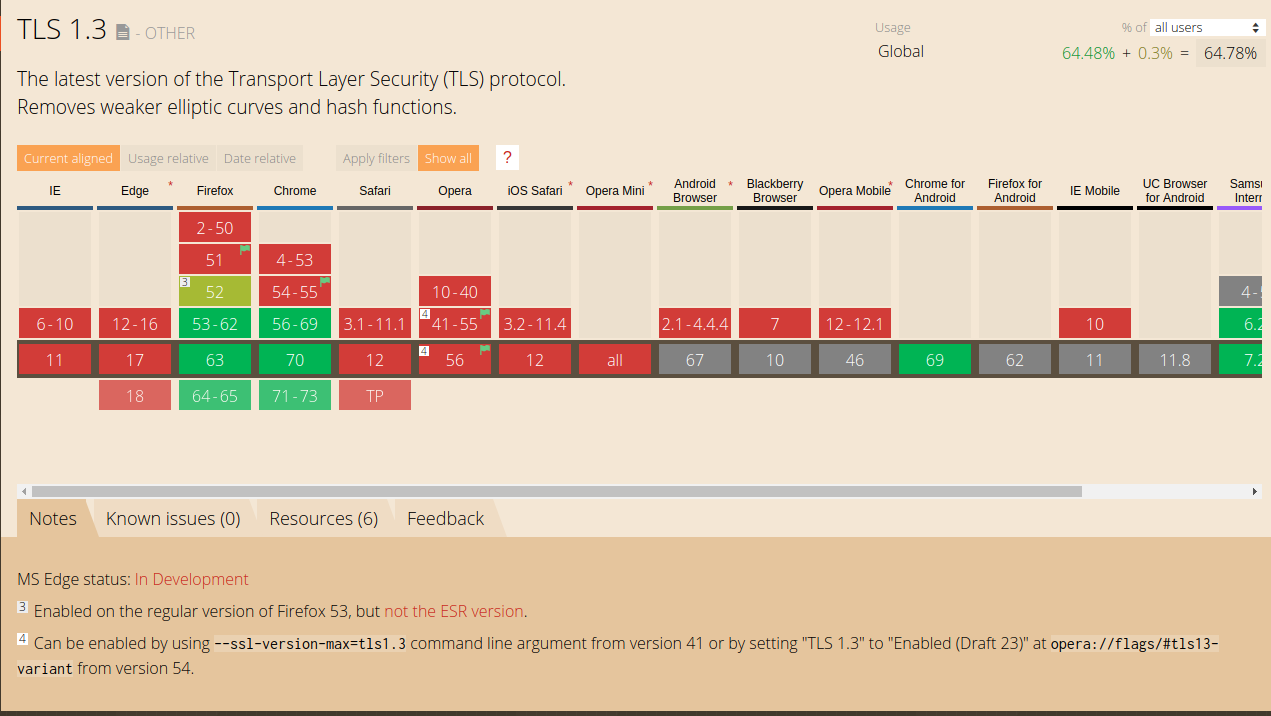

The first approach to mobile optimization is to isolate incoming connections by their environment, to maximize the optimization opportunities available in mobile. When you are running at scale, web-based and mobile-based connections should not be routed through the same infrastructure, because mobile connections have more opportunity for optimization than web connections do. For example, let’s say you want to utilize 0-RTT with TLS 1.3 between any client and the load balancer. The issue, at the time of writing, is that most web browsers do not support TLS 1.3, but mobile clients can be configured to support it. So, what do you do? Do you attempt to support both types of traffic on your load balancers and risk degraded performance for your mobile traffic? Nope. At this time the best option is to isolate your web and mobile traffic so that each can be served according to its specific needs. Isolating this traffic also gives your mobile environment the ability to configure TCP connections with options often not available on the web, but more on that in a minute.
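As a rough illustration of what isolation buys you, the sketch below builds two separate TLS configurations with Python's standard `ssl` module: one for a mobile-only listener that requires TLS 1.3, and one for a web-facing listener that still accepts TLS 1.2. The function names are hypothetical, and a real load balancer would express the same idea in its own configuration format. Certificate loading is left out, so these contexts are not ready to serve traffic as written.

```python
import ssl


def make_mobile_listener_context() -> ssl.SSLContext:
    """TLS settings for the isolated mobile tier: TLS 1.3 or nothing."""
    if not ssl.HAS_TLSv1_3:
        raise RuntimeError("the linked OpenSSL build lacks TLS 1.3 support")
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    # A real listener would also call context.load_cert_chain(...) here.
    return context


def make_web_listener_context() -> ssl.SSLContext:
    """TLS settings for the web tier: keep accepting TLS 1.2 clients."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

Keeping the two configurations separate means the mobile tier can adopt newer protocol features without waiting for every browser to catch up.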

Now that your mobile connections are isolated, it’s time to benchmark each step in the life of a connection to see which parts need the most attention. I would recommend taking multiple measurements with tcpdump at each step of the connection and then averaging the results for each part. For example, measure the time to set up the connection, to perform the TLS handshake, to exchange application data, and lastly, to tear down the connection.
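Once the per-phase timings have been pulled out of the captures, averaging them is straightforward. The sketch below assumes each capture has already been reduced to a dictionary of phase durations in milliseconds. The phase names and the sample numbers are made up for illustration and are not real measurements.

```python
from statistics import mean

# Hypothetical phase names matching the four steps described above.
PHASES = ("tcp_setup", "tls_handshake", "app_data", "teardown")


def average_phase_times(samples: list[dict[str, float]]) -> dict[str, float]:
    """Average each phase across all captured connections."""
    return {phase: mean(sample[phase] for sample in samples) for phase in PHASES}


def slowest_phase(averages: dict[str, float]) -> str:
    """Name of the phase with the highest average duration."""
    return max(averages, key=averages.get)


# Illustrative values only (milliseconds).
samples = [
    {"tcp_setup": 80.0, "tls_handshake": 170.0, "app_data": 40.0, "teardown": 10.0},
    {"tcp_setup": 100.0, "tls_handshake": 190.0, "app_data": 60.0, "teardown": 10.0},
    {"tcp_setup": 90.0, "tls_handshake": 180.0, "app_data": 50.0, "teardown": 10.0},
]
averages = average_phase_times(samples)
print(averages)
print(slowest_phase(averages))
```

The phase with the highest average is the natural place to start optimizing.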

With measurements in hand, it is time to start optimizing your mobile connections. The first optimizations I would evaluate are TLS 1.3, MPTCP, congestion window control, and the tuning of send and receive buffers. All of these decisions need to be based on what makes the most sense for your mobile needs, but the option with the largest impact is likely to be TLS 1.3. I briefly mentioned 0-RTT above, but let’s discuss what TLS 1.3 can do for you today if you are currently using TLS 1.2. The three major benefits of TLS 1.3 are a faster handshake, fewer packets transmitted per connection, and better overall security on the connection. In your earlier measurements, the benefits will show up in the span between connection setup (SYN, SYN-ACK, ACK) and the end of the TLS handshake. A full TLS 1.3 handshake needs one fewer round trip than TLS 1.2, and once a client has connected before, it can present a pre-shared key on later connections to skip part of the client and server exchange and get to application data faster. In some cases I have seen the time savings measure up to 45%. That is a big win for both performance and security if your environment can support it; see the IETF TLS 1.3 entry in the references. One thing to consider, though, is the potential cost of 0-RTT: early data can be replayed, so weigh whether it opens your connections up to replay attacks. See the IETF 0-RTT entry in the references.
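To see where the savings come from, it can help to count round trips. The sketch below is an idealized model, not a measurement: it assumes one round trip for TCP setup, two for a full TLS 1.2 handshake, one for a full TLS 1.3 handshake, and none for a TLS 1.3 0-RTT resumption. It ignores server processing time, packet loss, and certificate size, so real savings will differ. The names are illustrative.

```python
TCP_SETUP_RTTS = 1  # SYN, SYN-ACK, ACK

# Round trips spent in the TLS handshake before the first request can be sent.
HANDSHAKE_RTTS = {
    "tls1.2_full": 2,
    "tls1.3_full": 1,
    "tls1.3_0rtt": 0,  # early data travels with the first TLS flight
}


def time_to_first_request_ms(mode: str, rtt_ms: float) -> float:
    """Idealized delay before the client can send its first request."""
    return (TCP_SETUP_RTTS + HANDSHAKE_RTTS[mode]) * rtt_ms


def saving_vs_tls12(mode: str, rtt_ms: float) -> float:
    """Fraction of setup time saved relative to a full TLS 1.2 handshake."""
    baseline = time_to_first_request_ms("tls1.2_full", rtt_ms)
    return 1 - time_to_first_request_ms(mode, rtt_ms) / baseline


for mode in HANDSHAKE_RTTS:
    print(mode, time_to_first_request_ms(mode, rtt_ms=100.0))
```

On a high-latency cellular link each round trip is expensive, which is why removing even one of them is noticeable to users.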

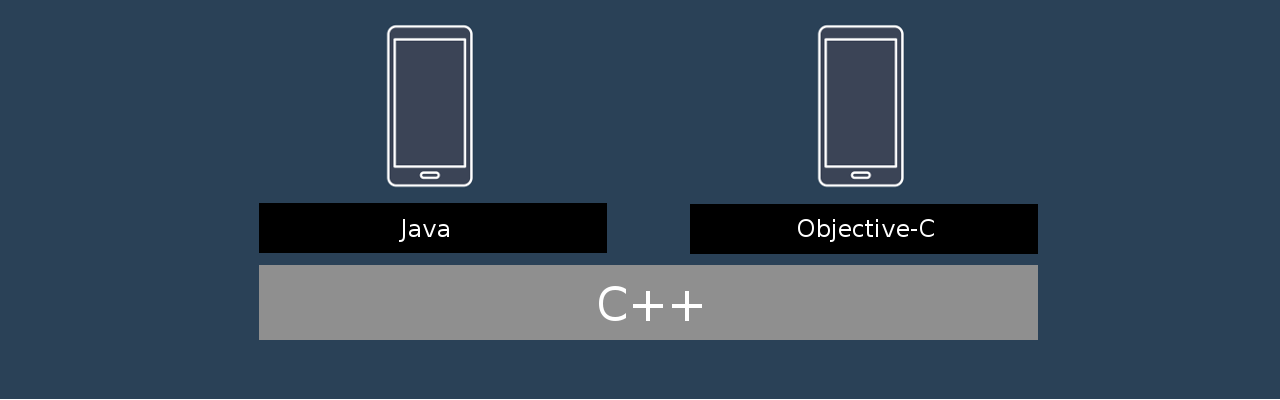

Next, let’s discuss congestion windows and buffer control. Congestion window and TCP buffer control is all about optimizing the transmission of the application data and messages that are sent after the TLS handshake is complete. The idea is that if an application can tune the size of the data segments going to the server and how much data the congestion window allows in flight, then fewer packets and round trips are needed, which saves connection time over the wire. From an implementation standpoint, congestion window and buffer control can be shared between an Android and an iOS client by developing a C++ library that controls the socket connection natively. Each platform would then have a Java-based or Objective-C-based API that calls into this library to control how the connection is managed and how large the application data sent to the server is. There are examples of companies and VPN providers doing this today, such as Facebook and F5 Networks. From a performance standpoint, each tweak you make to how data is sent to the server needs to be measured, as the results can vary depending on how the congestion window and the send buffers are tuned. I would recommend changing one variable at a time and then measuring, not changing multiple variables at once.
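The socket options involved can be sketched briefly. The example below uses Python's `socket` module for brevity rather than the C++ shared library described above, but `SO_SNDBUF` and `SO_RCVBUF` are the same options a native library would set. The function names and buffer sizes are illustrative assumptions. The kernel is free to round or clamp the requested sizes, so the sketch reads the values back. Reading the congestion control algorithm relies on `TCP_CONGESTION`, which is assumed here to be available only on some platforms such as Linux, so that call is guarded.

```python
import socket
from typing import Optional


def make_tuned_socket(send_buffer_bytes: int, receive_buffer_bytes: int) -> socket.socket:
    """Create a TCP socket and request specific send/receive buffer sizes."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF, send_buffer_bytes)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, receive_buffer_bytes)
    return sock


def effective_buffer_sizes(sock: socket.socket) -> dict[str, int]:
    """Read back what the kernel actually granted, which may differ."""
    return {
        "send": sock.getsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF),
        "receive": sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF),
    }


def congestion_algorithm(sock: socket.socket) -> Optional[str]:
    """Name of the congestion control algorithm, or None if not exposed."""
    option = getattr(socket, "TCP_CONGESTION", None)
    if option is None:
        return None
    try:
        raw = sock.getsockopt(socket.IPPROTO_TCP, option, 16)
    except OSError:
        return None
    return raw.split(b"\x00", 1)[0].decode("ascii")


sock = make_tuned_socket(32 * 1024, 32 * 1024)
print(effective_buffer_sizes(sock), congestion_algorithm(sock))
sock.close()
```

Logging the effective values alongside each benchmark run makes it easier to tie a change in timing back to the one variable that was changed.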

Lastly, let’s talk about Multipath TCP (MPTCP). MPTCP can be used to optimize your mobile traffic by spreading a connection's traffic across more than one network path, such as Wi-Fi and cellular, between your mobile application and the server. In some cases MPTCP provides faster transmission and better resilience for connections experiencing lots of retransmissions and failures. When measuring the gains from MPTCP, evaluate the entire connection end to end, as multipath changes the complete strategy for making a connection at the TCP level. iOS provides full support for using MPTCP in your project. On Android, though, MPTCP is trickier because carrier and kernel support varies across versions. If you are able to use MPTCP across your mobile platforms, it may be worth measuring the time savings you get from MPTCP against those from controlling your congestion windows and TCP buffers, and then deciding which technique makes the most sense. I would not attempt to use all three techniques at the same time.
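Because MPTCP support is uneven, any client that tries it needs a fallback path. The sketch below shows that pattern with Python's `socket` module, assuming Python 3.10 or newer on a Linux kernel with MPTCP enabled for the multipath case. It is not how an iOS or Android app would enable MPTCP, since those platforms have their own APIs, and the function name is hypothetical.

```python
import socket


def open_stream_socket(prefer_mptcp: bool = True) -> tuple[socket.socket, bool]:
    """Return (socket, used_mptcp), falling back to plain TCP when needed."""
    # IPPROTO_MPTCP is only defined where Python and the platform expose it.
    mptcp_proto = getattr(socket, "IPPROTO_MPTCP", None)
    if prefer_mptcp and mptcp_proto is not None:
        try:
            sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM, mptcp_proto)
            return sock, True
        except OSError:
            # The kernel was built without MPTCP or has it disabled.
            pass
    return socket.socket(socket.AF_INET, socket.SOCK_STREAM), False


sock, used_mptcp = open_stream_socket()
print("MPTCP in use:", used_mptcp)
sock.close()
```

Recording whether the multipath socket was actually used lets you separate MPTCP connections from fallback connections when comparing end-to-end timings.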

In Summary ⌛️

In summary, mobile networking will always continue to improve, as there will always be demand to push connectivity faster and faster. I am happy to have been a part of projects where I have seen the techniques described in this article deliver mobile performance improvements in very complex networks. It makes me very excited to see how far mobile performance can be pushed and what the next big leap in mobile network performance at scale will be.

Thank you very much for reading. If you have any questions, comments, war stories, or concerns relating to this topic, please leave a comment and I will get back to you as soon as I am able to.

References:

- Facebook Zero Protocol: https://code.fb.com/android/building-zero-protocol-for-fast-secure-mobile-connections/

- iOS MPTCP: https://support.apple.com/en-us/HT201373

- Android MPTCP: https://multipath-tcp.org/pmwiki.php/Users/Android

- Browser Support TLS 1.3: https://caniuse.com/#feat=tls1-3

- IETF TLS 1.3: https://tools.ietf.org/html/draft-ietf-tls-tls13-28#section-2.3

- IETF 0-RTT: https://tools.ietf.org/id/draft-thomson-tls-0rtt-and-certs-00.html

- MPTCP: https://multipath-tcp.org/pmwiki.php/Users/Android

- OpenSSL 1.1.1: https://www.agnosticdev.com/blog-entry/network-security/openssl-111-lts